Why Whisper Still Struggles with Australian English - and What We Did About It

By Kathy Reid · Mozilla Data Collective

Ask a Queenslander to say "no worries" into a Home Assistant Voice Preview. There's a decent chance it mishears them. Ask someone from Radelaide and things get worse. Ask anyone who grew up calling things "heaps good" and you'll start to understand that the problem isn't the speaker — it's the model.

OpenAI's Whisper is genuinely impressive. It supports 99 languages, runs locally, and powers some of the most popular open-source voice assistant stacks in the world, including faster-whisper, which sits underneath Home Assistant's local voice pipeline. But Whisper was trained on data that skews hard towards American and British English. Australian English, with its distinct vowel shifts, characteristic prosody, and accent variation across states and subcultures, is under-represented in that training corpus. The result is a model that routinely mishears many of the 26 million people who call Australia home.

This post explains what we did about it at Everything Open 2026 in Canberra, and gives you everything you need to replicate the result for Australian English — or adapt the approach for any under-represented accent available in Common Voice.

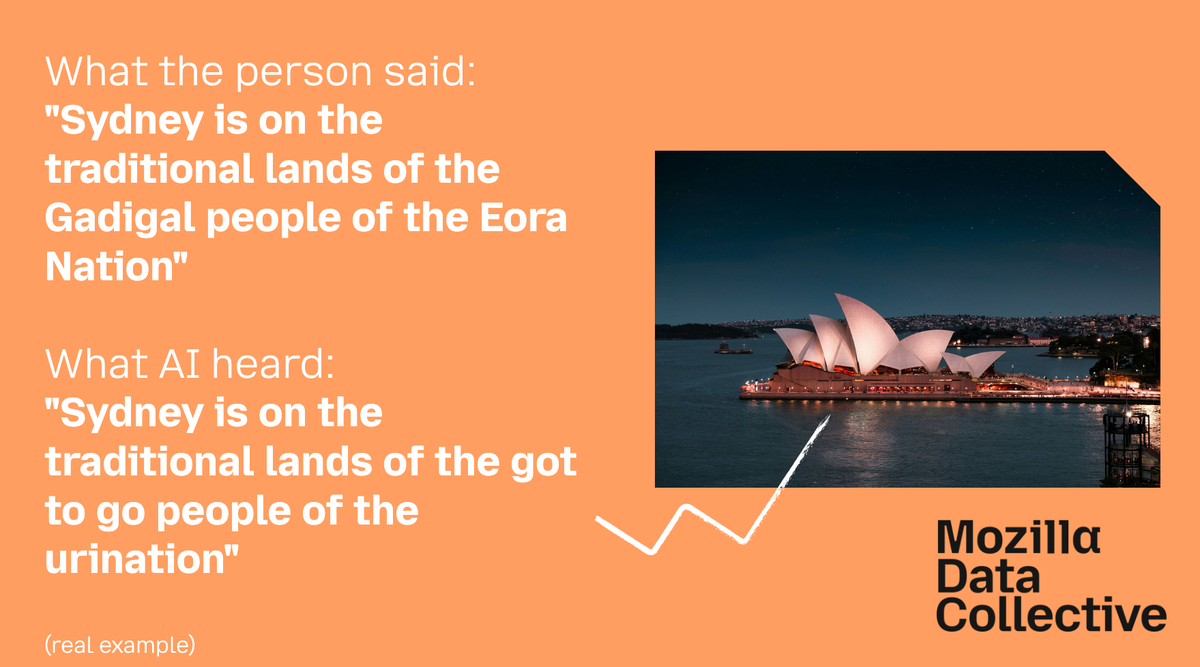

The Problem in Plain Terms

When a speech recognition model is trained, it learns to map acoustic patterns — the raw shapes of sound — onto text. That mapping is only as good as the data it's trained on. If the training data contains very few examples of a particular accent, vowel pattern, or phonological feature, the model's predictions for speakers with those characteristics will be less accurate.

For Australian English specifically, a few features create consistent failure modes in Whisper:

- Vowel shifts: Australian English has a well-documented system of vowel shifts that diverges significantly from American English. Consider, for example, how many Australians say "vase" to rhyme with "Mars", whereas an American speaker is more likely to rhyme it with "ways" or "praise".

- High Rising Terminal (HRT): the tendency for declarative statements to end with a rising intonation, which models sometimes mis-parse as a question or misread as uncertainty.

- Regional variation: "General Australian" (the reference accent heard on the ABC) behaves differently to broad Queensland speech, South Australian speech, or the accents common in multicultural urban centres like Western Sydney, such as the emerging Multicultural Australian English (Cox & Penney, 2024).

The fix, fine-tuning, is well understood in principle. You take a trained model and continue training it on data whose distribution is closer to your use case. In simple terms, you're not starting from scratch; you're nudging the model's weights so that it pays more attention to the acoustic patterns it will actually encounter once deployed.
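To make "nudging the weights" concrete, here is a deliberately tiny sketch in plain Python. It is not Whisper and not the Blueprint's code: a hypothetical one-parameter model is first trained on one synthetic distribution, then fine-tuned by continuing gradient descent from those learned weights on a shifted distribution, using a smaller learning rate than the first run.

```python
import random


def mse(w: float, data: list[tuple[float, float]]) -> float:
    """Mean squared error of the one-parameter model y = w * x."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


def train(w: float, data: list[tuple[float, float]], lr: float, steps: int) -> float:
    """Full-batch gradient descent on MSE, starting from the given weight w."""
    for _ in range(steps):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def make_data(slope: float, n: int, rng: random.Random) -> list[tuple[float, float]]:
    """Synthetic (x, y) pairs with y = slope * x plus a little Gaussian noise."""
    xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    return [(x, slope * x + rng.gauss(0.0, 0.05)) for x in xs]


rng = random.Random(0)
general = make_data(2.0, 200, rng)  # stand-in for the broad original training data
target = make_data(2.4, 50, rng)    # stand-in for under-represented target data

w_pre = train(0.0, general, lr=0.5, steps=200)  # "pre-training" from scratch
w_ft = train(w_pre, target, lr=0.2, steps=100)  # fine-tuning: resume from w_pre

print(f"pre-trained: w={w_pre:.2f}, MSE on target={mse(w_pre, target):.4f}")
print(f"fine-tuned:  w={w_ft:.2f}, MSE on target={mse(w_ft, target):.4f}")
```

Fine-tuning Whisper applies the same idea to hundreds of millions of parameters: the optimiser starts from the pre-trained checkpoint rather than from random weights.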

The Stack

Our approach draws on the Mozilla.AI Speech-to-Text Finetuning Blueprint, developed by our colleague Kostis Saitas Zarkias. It's a clean, reproducible pipeline that handles data preparation, training configuration, and evaluation. We wrapped a tutorial around it, customised it for Australian-accented data, and ran it as a hands-on 100-minute workshop.

The full stack is:

| Component | What it does |
|---|---|
| Mozilla Data Collective | Data discovery, download, and licensing |
| Common Voice v24 (en-AU subset) | 55,673 clips, ~1.92 GB, CC0 licence |
| Mozilla.AI Blueprint | Fine-tuning pipeline on top of 🤗 Transformers |
| Google Colab (free tier GPU) | Compute (no local GPU required) |
| Hugging Face Hub | Model storage and sharing |
| faster-whisper | Deployment target for Home Assistant |

Everything is open source. Everything is CC0-licensed on the data side. You don't need an Anthropic API key, an OpenAI account, or a cloud GPU credit card.

The Data: Where It Came From

Since 2022, Mozilla Common Voice has allowed contributors to self-report the accent they speak with. That means there's now a growing pool of voice data tagged with granular accent metadata — and we can use that to extract accent-specific subsets for fine-tuning.

The dataset we used for the tutorial is the Common Voice v24 English – en-AU subset, curated and hosted on Mozilla Data Collective. It covers six Australian accent categories:

- Australian English

- General Australian

- South Australia

- Educated Australian Accent

- Sydney – Middle Eastern seaboard Australian

- Queenslandish

55,673 rows. Approximately 4.68 minutes of audio. CSV + MP3. CC0.

If you want to build your own accent subset from Common Voice, the tutorial repository includes a dedicated extract-from-cv-by-accent directory with the subsetting code. The preprocessing and data curation logic is the part of the work that tends to be invisible in tutorials, yet it is often the most practically useful.
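As an illustration of what accent subsetting involves (a hypothetical sketch, not the code in extract-from-cv-by-accent), the filter below keeps only rows whose self-reported accents match a target set. It assumes a Common Voice `validated.tsv`-style file with `path`, `sentence` and `accents` columns, and that multiple accents in one cell are separated by `|`; check both assumptions against the release you download.

```python
import csv
import io
from typing import Iterator, TextIO

# Accent tags for the en-AU subset, as listed above. Exact spellings are an
# assumption: check them against the accents column of your release.
TARGET_ACCENTS = {
    "Australian English",
    "General Australian",
    "South Australia",
    "Educated Australian Accent",
    "Sydney – Middle Eastern seaboard Australian",
    "Queenslandish",
}


def parse_accents(cell: str) -> set[str]:
    """Split a free-text accents cell into case-folded tags (assumes '|' separators)."""
    return {tag.strip().casefold() for tag in cell.split("|") if tag.strip()}


def filter_by_accent(tsv: TextIO, targets: set[str]) -> Iterator[dict[str, str]]:
    """Yield TSV rows whose self-reported accents include at least one target tag."""
    wanted = {t.casefold() for t in targets}
    # QUOTE_NONE: treat quote characters inside sentences as ordinary text.
    reader = csv.DictReader(tsv, delimiter="\t", quoting=csv.QUOTE_NONE)
    for row in reader:
        if parse_accents(row.get("accents") or "") & wanted:
            yield row


# Tiny in-memory stand-in for a validated.tsv file (hypothetical rows).
sample = io.StringIO(
    "path\tsentence\taccents\n"
    "a.mp3\tNo worries.\tAustralian English\n"
    "b.mp3\tThat's heaps good.\tQueenslandish|General Australian\n"
    "c.mp3\tHello there.\tUnited States English\n"
    "d.mp3\tSee you this arvo.\t\n"
)
subset = list(filter_by_accent(sample, TARGET_ACCENTS))
print([row["path"] for row in subset])  # ['a.mp3', 'b.mp3']
```

For a real file, pass `open("validated.tsv", newline="", encoding="utf-8")` instead of the in-memory sample; the generator streams rows, so the full TSV never needs to sit in memory.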

Running the Tutorial

The tutorial is structured around a single Google Colab notebook: EO2026_teach_whisper_to_speak_Australian.ipynb.

What you'll need before you start:

- A Mozilla Data Collective account and the en-AU dataset download (1.92 GB)

- A Hugging Face account and a fine-grained access token (HF_TOKEN) with read/write repo permissions and inference access

- A Google account to run Colab
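Because the expensive steps come later, it can save time to fail fast if the token is missing. This is a minimal sketch, assuming the token is exposed as the `HF_TOKEN` environment variable (which `huggingface_hub` reads automatically); the helper name `require_hf_token` is hypothetical and not part of the notebook.

```python
import os


def require_hf_token(env_var: str = "HF_TOKEN") -> str:
    """Return the Hugging Face token from the environment, failing early if it looks wrong."""
    token = os.environ.get(env_var, "").strip()
    if not token:
        raise RuntimeError(
            f"{env_var} is not set. Create a fine-grained token with read/write "
            "repo permissions and expose it as an environment variable."
        )
    # Current Hugging Face user access tokens start with "hf_"; adjust if that changes.
    if not token.startswith("hf_"):
        raise RuntimeError(f"{env_var} does not look like a Hugging Face token")
    return token
```

In Colab, one option is to store the token as a notebook secret, copy it into `os.environ["HF_TOKEN"]` in the first cell (for example via `google.colab.userdata.get("HF_TOKEN")`), and then call `require_hf_token()` before anything else runs.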

The pipeline, in order:

- Environment setup: install dependencies and configure Hugging Face credentials

- Data loading and preparation: resample the audio to the 16 kHz sample rate Whisper expects, and reshape the dataset into the format expected by the Blueprint

- Fine-tuning on GPU: Colab's free T4 is enough; training runs while you have a coffee

- Evaluation: measure Word Error Rate (WER) on held-out Australian speech before and after fine-tuning

- Export to faster-whisper format: convert the fine-tuned model for deployment in Home Assistant
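WER is the number that tells you whether fine-tuning actually helped. As a minimal, self-contained sketch (not necessarily how the Blueprint computes it), WER is the word-level edit distance between the reference transcript and the model's hypothesis, divided by the number of reference words. The normalisation here (lower-casing and stripping ASCII punctuation) is a simplifying assumption; real evaluations often use a library such as jiwer or the 🤗 `evaluate` "wer" metric together with a more careful text normaliser.

```python
import string

# Translation table that deletes every ASCII punctuation character.
_STRIP_PUNCT = str.maketrans("", "", string.punctuation)


def normalise(text: str) -> list[str]:
    """Lower-case, drop ASCII punctuation and split on whitespace."""
    return text.lower().translate(_STRIP_PUNCT).split()


def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count,
    using a word-level Levenshtein (edit-distance) alignment."""
    ref, hyp = normalise(reference), normalise(hypothesis)
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the previous ref prefix and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            curr[j] = min(
                prev[j] + 1,             # deletion: ref word missing from hyp
                curr[j - 1] + 1,         # insertion: extra word in hyp
                prev[j - 1] + (r != h),  # substitution, or a free match
            )
        prev = curr
    return prev[-1] / len(ref)


# 1 substitution over 5 reference words
print(word_error_rate("Turn on the kitchen lights.", "turn on the chicken lights"))
# 1 substitution + 1 insertion over 3 reference words
print(word_error_rate("No worries, mate!", "no worry is mate"))
```

Comparing the mean WER of the base and fine-tuned models over the same held-out clips gives the before-and-after figure; a lower number is better.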

Known issue: there is a currently unresolved memory-exhaustion bug that can surface during the fine-tuning step in the Colab notebook. If you hit it, check your batch size configuration and try reducing it. PRs with a proper fix are welcome!
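If you do reduce the batch size, gradient accumulation is one common way to keep the effective batch size (samples per optimiser step) constant while lowering peak GPU memory: each forward/backward pass processes fewer samples, and gradients from several passes are accumulated before the weights update. In 🤗 Transformers these are the `per_device_train_batch_size` and `gradient_accumulation_steps` training arguments; whether the Blueprint exposes them under those names is an assumption to check against its config. The helper and starting values below are hypothetical, and this is a workaround for memory pressure rather than a fix for the underlying bug.

```python
def shrink_batch(per_device_batch: int, grad_accum_steps: int, factor: int = 2) -> tuple[int, int]:
    """Cut the per-device batch by `factor` and raise gradient accumulation by the same
    factor, so samples per optimiser step (their product, on one GPU) stay constant."""
    if factor < 1 or per_device_batch % factor:
        raise ValueError("factor must be a positive divisor of per_device_batch")
    return per_device_batch // factor, grad_accum_steps * factor


# Hypothetical starting point; substitute the values from your own config.
schedule = [(16, 1)]
while schedule[-1][0] > 1:
    schedule.append(shrink_batch(*schedule[-1]))

for batch, accum in schedule:
    print(f"per_device_train_batch_size={batch:>2}  "
          f"gradient_accumulation_steps={accum:>2}  effective={batch * accum}")
```

Work down the list until training fits in memory. Smaller per-device batches usually make each epoch slower, so stop at the first setting that runs. Other Transformers options that reduce memory, such as `gradient_checkpointing=True` and `fp16=True`, may also help.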

Why This Matters Beyond Australian English

The pattern here is completely general:

- Find an accent or dialect under-represented in Whisper's training data

- Find or build a tagged speech dataset for that accent (Common Voice has tagging for hundreds of accents globally)

- Use the same Blueprint pipeline to fine-tune

- Export and deploy
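For the second step above, one quick way to judge whether an accent has enough tagged data to be worth a fine-tuning run is to count clips per self-reported accent tag. This is a minimal sketch, assuming a `validated.tsv`-style file with an `accents` column whose multiple values are `|`-separated; the sample rows and tag spellings are illustrative only.

```python
import csv
import io
from collections import Counter
from typing import TextIO


def accent_counts(tsv: TextIO) -> Counter[str]:
    """Count clips per self-reported accent tag in a Common Voice-style TSV.

    A clip with several tags counts once towards each; untagged clips are skipped."""
    counts: Counter[str] = Counter()
    reader = csv.DictReader(tsv, delimiter="\t", quoting=csv.QUOTE_NONE)
    for row in reader:
        cell = row.get("accents") or ""
        counts.update({tag.strip() for tag in cell.split("|") if tag.strip()})
    return counts


# Hypothetical rows standing in for a validated.tsv file.
sample = io.StringIO(
    "path\tsentence\taccents\n"
    "1.mp3\tAye.\tScottish English\n"
    "2.mp3\tHello.\tScottish English|England English\n"
    "3.mp3\tHowzit.\tSouth African English\n"
    "4.mp3\tOkay.\t\n"
)
counts = accent_counts(sample)
for accent, n in counts.most_common():
    print(f"{n:>3}  {accent}")
```

Run against a full release, the same loop gives a ranked list of accents and how many clips each has, which is a reasonable first filter before deciding which accent to target.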

Common Voice now carries accent tags for English spoken everywhere from South Africa and Singapore to Nigeria and Scotland, and the methodology in this tutorial applies to all of them. If you're building a voice product for any community whose English sounds different from the American-British norm, or working in a language where the default Whisper model under-performs, this workflow is your starting point!

The Bigger Picture: Open Data as Infrastructure

The thing that makes this tutorial possible isn't the fine-tuning technique — that's been documented elsewhere. It's the availability of accent-tagged, openly licensed, ethically collected speech data in a form that's easy to discover and use.

Mozilla Data Collective is our answer to the problem of AI data being either locked up in proprietary silos or extracted from communities without consent. It's built on a model of community contribution and transparent governance, and adds tooling for data stewardship, access control, and dataset discovery.

Every clip in the en-AU dataset was contributed by a person who chose to record it. Every accent tag was self-reported. That's what ethical AI data infrastructure looks like.

Get Involved

- Run the tutorial yourself: GitHub repo

- Get the dataset: Mozilla Data Collective

The code is Apache 2.0. The data is CC0. The methodology is reproducible. If your voice assistant mishears you, you now have the tools to fix that — and the invitation to help others do the same.

Kathy Reid is Head of Applied R&D at Mozilla Data Collective.