Fine-Tune a Speech-to-Text Model for Any Language - Including Yours

A step-by-step developer tutorial from Kostis at Mozilla Data Collective

Most speech recognition models were built with English, or a handful of well-resourced languages, in mind. If you speak Khmer, Galician, or any of the hundreds of languages underrepresented in mainstream AI, you've probably hit a wall trying to get accurate transcriptions.

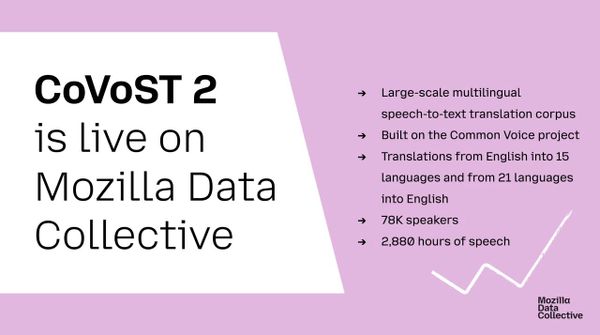

The Mozilla Data Collective (MDC) is working to change that. Our mission is a tech future that is multilingual, multicultural, and multimodal - built on the principle that communities should have genuine data agency and be the primary beneficiaries of sharing their data. The speech-to-text-finetune blueprint is a practical tool for making that a reality by enabling developers to easily fine-tune Whisper-based or MMS-based STT models.

In this tutorial, we'll walk through how to fine-tune OpenAI's Whisper model on your own language using the Mozilla Data Collective platform's datasets or your own custom audio data. Everything runs locally — even on a laptop — keeping your data private.

You can also view a video walk-through of this tutorial here.

What You'll Build

By the end of this tutorial, you'll have:

- A fine-tuned Whisper model optimised for your target language

- A local transcription app you can test against your own voice

- (Optionally) a model pushed to the Hugging Face Hub for sharing

As a concrete example of what's possible: a Galician Whisper model fine-tuned with this blueprint produces dramatically cleaner transcriptions than the base whisper-small model, correctly handling language-specific phonology that the base model garbles.

Prerequisites

- Python 3.10+

- ffmpeg installed on your system

- A Mozilla Data Collective API key (free, instructions below)

- A GPU is helpful but not required for smaller models
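
If you want to confirm the first two prerequisites before cloning anything, a short check like the one below can help. It is a minimal sketch and not part of the blueprint; the function name `check_prerequisites` is made up for illustration, and it only verifies that an `ffmpeg` executable is on your PATH, not that it is a particular version.

```python
import shutil
import sys


def check_prerequisites(min_python=(3, 10)):
    """Return a dict mapping each prerequisite name to True or False."""
    return {
        # The tutorial asks for Python 3.10 or newer.
        "python": sys.version_info[:2] >= min_python,
        # shutil.which returns None when the executable is not on PATH.
        "ffmpeg": shutil.which("ffmpeg") is not None,
    }


if __name__ == "__main__":
    for name, ok in check_prerequisites().items():
        print(f"{name}: {'ok' if ok else 'missing'}")
```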

Step 1: Clone the Repo and Install Dependencies

git clone https://github.com/Mozilla-Data-Collective/speech-to-text-finetune

cd speech-to-text-finetune

pip install -e .

Then install ffmpeg:

# Ubuntu

sudo apt install ffmpeg

# macOS

brew install ffmpeg

Step 2: Get Your MDC API Key

The MDC platform hosts Common Voice datasets across a wide range of languages and provides a Python SDK for accessing them. To use it:

- Create a free account at https://mozilladatacollective.com/

- Generate an API key from your account dashboard

- Copy the .env template and add your key:

cp example_data/.env.example src/speech_to_text_finetune/.env

Then open the .env file and set:

MDC_API_KEY=your_api_key_here

Note: Values in this .env file will override any environment variables with the same name already set on your system.
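
To make the override behaviour in the note concrete, here is a small standard-library sketch. It is not the blueprint's actual loader: `parse_env_text` and `apply_env` are hypothetical helpers, and the key values are placeholders. The point is only that, with override enabled, the value from the file replaces one already present in the environment.

```python
def parse_env_text(text):
    """Parse KEY=VALUE lines, skipping blank lines and # comments."""
    values = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Drop surrounding whitespace and optional quotes around the value.
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def apply_env(values, environ, override=True):
    """Copy parsed values into environ; with override=True the file wins."""
    for key, value in values.items():
        if override or key not in environ:
            environ[key] = value
    return environ


# Simulated system environment and .env contents (placeholder key values).
system_env = {"MDC_API_KEY": "key_from_shell"}
file_values = parse_env_text("# MDC credentials\nMDC_API_KEY=key_from_dotenv\n")
apply_env(file_values, system_env, override=True)
print(system_env["MDC_API_KEY"])  # prints: key_from_dotenv
```

If a key you exported in your shell seems to be ignored, check whether the .env file defines the same name.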

Step 3: Choose Your Dataset

You have three options for sourcing training data.

Option A: Load via the MDC Python SDK (Recommended)

This is the easiest path. Browse datasets on the MDC platform and find a dataset for your language. Open the dataset page, click "Connect API", and copy either the "Dataset ID" or the "Dataset slug"; the two can be used interchangeably.

dataset_id_or_slug="khmer-asr-cultural-dataset-4e33cd05"

↑ this is your dataset_id

Important: You must accept the dataset's terms and conditions on the MDC platform before downloading via the API.

Option B: Use a Locally Downloaded Dataset

Download and extract the dataset zip from the MDC platform, then point your config at the local directory. This is useful if you're working offline or want to pre-process the data yourself.

Option C: Bring Your Own Data

You can also record and label your own custom dataset using the built-in UI:

python src/speech_to_text_finetune/make_custom_dataset_app.py

The app walks you through recording audio clips and adding transcriptions. Your dataset should produce a .csv, .tsv, or .parquet file with audio_path and transcription columns.
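
If you assemble the file by hand rather than through the app, it can be worth checking it before training. The sketch below assumes a delimited text file (.csv or .tsv) and uses only the standard library; `find_bad_rows` is a hypothetical helper, not something the blueprint provides, and the sample rows are invented.

```python
import csv
import io

REQUIRED_COLUMNS = {"audio_path", "transcription"}


def find_bad_rows(handle, delimiter=","):
    """Return row numbers (1-based, header excluded) with an empty required field.

    Raises ValueError when a required column is missing from the header.
    """
    reader = csv.DictReader(handle, delimiter=delimiter)
    missing = REQUIRED_COLUMNS - set(reader.fieldnames or [])
    if missing:
        raise ValueError(f"missing columns: {sorted(missing)}")
    bad = []
    for number, row in enumerate(reader, start=1):
        # Short rows yield None for absent fields, so fall back to "".
        if any(not (row[col] or "").strip() for col in REQUIRED_COLUMNS):
            bad.append(number)
    return bad


sample = io.StringIO(
    "audio_path,transcription\n"
    "clips/rec_0.wav,first sentence\n"
    "clips/rec_1.wav,\n"
)
print(find_bad_rows(sample))  # prints: [2]
```

For a .tsv file, pass `delimiter="\t"`. For a file on disk, open it with `open(path, newline="", encoding="utf-8")` and pass the handle.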

Step 4: Configure Your Fine-tuning Run

Create or edit a config_whisper.yaml file. Here's a minimal example using the MDC SDK path:

model_id: openai/whisper-tiny

dataset_id: cminc35no007no707hql26lzk # Your MDC dataset ID

language: Khmer

repo_name: whisper-tiny-km-finetuned

download_directory: /path/to/downloads # Optional path to download the dataset

training_hp:

  push_to_hub: False

  hub_private_repo: True

  num_train_epochs: 10

  per_device_train_batch_size: 16

  learning_rate: 1e-5

Choosing a model size: Start with whisper-tiny or whisper-small for faster iteration. Move to whisper-medium or whisper-large-v3-turbo once you're happy with the pipeline and have a GPU available.
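
To get a feel for how long a run with these hyperparameters will take, you can estimate the number of optimizer updates from the dataset size, batch size, and epoch count. The sketch below is a rough approximation that assumes one device and no gradient accumulation by default; the 2,000-clip dataset size is made up, and `optimizer_steps` is a hypothetical helper rather than part of the blueprint.

```python
import math


def optimizer_steps(num_examples, per_device_train_batch_size, num_train_epochs,
                    num_devices=1, gradient_accumulation_steps=1):
    """Approximate the total number of optimizer updates for a training run."""
    effective_batch = (per_device_train_batch_size * num_devices
                       * gradient_accumulation_steps)
    # The last batch of an epoch may be partial, hence the ceiling.
    steps_per_epoch = math.ceil(num_examples / effective_batch)
    return steps_per_epoch * num_train_epochs


# 2,000 clips is a made-up dataset size; 16 and 10 come from the example config.
print(optimizer_steps(2000, 16, 10))  # prints: 1250
```

Multiplying the result by the seconds per step you observe early in training gives a rough total duration, which is a quick way to decide whether a larger model size is practical on your hardware.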

Unsupported languages: If your language wasn't part of Whisper's original training data, don't give up. Use Glottolog to find the closest related language that is in Whisper's supported list, and set that as your language value. This gives the decoder more appropriate token priors. If no related language appears in Whisper's list, set language: None.
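
The fallback rule above can be written down as a few lines of Python. This is only an illustration of the decision, not code from the blueprint: `pick_language` is a hypothetical helper, the supported set is a small illustrative subset of Whisper's list, and the language names passed in the last two calls are placeholders you would replace with what you find in Glottolog.

```python
def pick_language(target, relatives, supported):
    """Return the value to use for `language` in the config.

    target: the language you are fine-tuning for.
    relatives: related languages, closest first (for example from Glottolog).
    supported: names of languages in Whisper's supported list.
    """
    supported_lower = {name.lower() for name in supported}
    for candidate in [target, *relatives]:
        if candidate.lower() in supported_lower:
            return candidate
    return None  # written as `language: None` in the config


# Illustrative subset only; check Whisper's own list for the full set.
SUPPORTED_SUBSET = {"Khmer", "Galician", "Portuguese", "Spanish"}

print(pick_language("Khmer", [], SUPPORTED_SUBSET))  # prints: Khmer
print(pick_language("my-language", ["closest-relative", "Spanish"], SUPPORTED_SUBSET))  # prints: Spanish
print(pick_language("my-language", ["closest-relative"], SUPPORTED_SUBSET))  # prints: None
```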

Step 5: Fine-tune

python src/speech_to_text_finetune/finetune_whisper.py

The script handles dataset loading, train/test splitting, tokenization, and training. If your dataset already defines official train and test splits (as Common Voice does), those are preserved automatically and test_size in your config is ignored.
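
The split behaviour described above can be pictured with the following sketch. It is not the blueprint's implementation: `resolve_splits` is a hypothetical function, the dataset is modelled as either a dict of official splits or a flat list, and the default `test_size` and seed are arbitrary choices for the example.

```python
import random


def resolve_splits(dataset, test_size=0.2, seed=42):
    """Return (train, test) lists of examples.

    dataset is either a dict with "train" and "test" keys (official splits)
    or a flat sequence of examples that still needs splitting.
    """
    if isinstance(dataset, dict) and {"train", "test"} <= set(dataset):
        # Official splits are kept as-is; test_size is ignored in this branch.
        return list(dataset["train"]), list(dataset["test"])
    examples = list(dataset)
    # A seeded shuffle keeps the split reproducible between runs.
    random.Random(seed).shuffle(examples)
    n_test = max(1, round(len(examples) * test_size))
    return examples[n_test:], examples[:n_test]


train, test = resolve_splits({"train": ["a", "b", "c"], "test": ["d"]}, test_size=0.5)
print(len(train), len(test))  # prints: 3 1

train, test = resolve_splits(list(range(10)), test_size=0.2)
print(len(train), len(test))  # prints: 8 2
```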

Training progress will be logged to the console.

Step 6: Test Your Model

Once training completes, fire up the transcription app to test your fine-tuned model against your own voice or a sample audio file:

python demo/transcribe_app.py

Enter the local path to your fine-tuned model (or a Hugging Face model ID if you pushed it), record a sample, and see the transcription.

To compare your fine-tuned model side-by-side against the base model:

python demo/model_comparison_app.py

This makes it easy to see exactly where fine-tuning improved accuracy on your language's specific phonology and vocabulary.
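
The comparison app is interactive. If you also want a number to track, a common metric for speech-to-text is word error rate (WER): the word-level edit distance between a reference transcript and the model output, divided by the number of reference words. The sketch below is a plain standard-library version with invented English transcripts; it is not taken from the blueprint, and it does no text normalisation.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # previous[j] holds the edit distance between ref[:i-1] and hyp[:j].
    previous = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            current.append(min(previous[j] + 1,          # deletion
                               current[j - 1] + 1,       # insertion
                               previous[j - 1] + cost))  # substitution or match
        previous = current
    return previous[-1] / len(ref)


reference = "the cat sat on the mat"
base_output = "the cat sit on mat"           # made-up base model output
tuned_output = "the cat sat on the mat"      # made-up fine-tuned output
print(f"{word_error_rate(reference, base_output):.2f}")   # prints: 0.33
print(f"{word_error_rate(reference, tuned_output):.2f}")  # prints: 0.00
```

Splitting on whitespace suits languages that separate words with spaces. For scripts that do not, such as Khmer, a character-level variant of the same calculation is usually more informative.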

Step 7 (Optional): Speed Up Inference with Faster-Whisper

Once you're happy with your model, you can convert it to the faster-whisper format for significantly faster inference — useful if you're deploying this in a production app or on lower-powered hardware. Check out the tutorial by Kathy from the MDC community:

tutorial-whisper-fine-tuning-australian-EO2026
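
For orientation, faster-whisper runs on CTranslate2, whose `ct2-transformers-converter` command converts Hugging Face Transformers checkpoints. The sketch below only builds that command as an argument list so you can inspect it before running anything. The paths are placeholders, `build_convert_command` is a hypothetical helper, and whether your fine-tuned output directory contains the files named in `copy_files` is an assumption you should check. The linked tutorial may take a different route.

```python
import shlex


def build_convert_command(model_dir, output_dir, quantization="float16",
                          copy_files=("tokenizer.json", "preprocessor_config.json")):
    """Build the argument list for CTranslate2's Transformers converter."""
    command = ["ct2-transformers-converter",
               "--model", model_dir,
               "--output_dir", output_dir,
               "--quantization", quantization]
    if copy_files:
        # Extra files copied from the source model into the converted directory.
        command += ["--copy_files", *copy_files]
    return command


cmd = build_convert_command("path/to/finetuned-model", "path/to/finetuned-model-ct2")
print(shlex.join(cmd))
```

Once the `ctranslate2` and `transformers` packages are installed, the list can be passed to `subprocess.run(cmd, check=True)`.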

Try It Without Installing Anything

Not ready to set up a local environment? You have some other instant options:

- GitHub Codespaces: Launch a ready-to-go environment with everything pre-installed, suitable for small-scale experiments on a machine with limited resources.

- Google Colab: Open the fine-tuning notebook (demo/mdc_khmer.ipynb), which includes a full MDC flow for Khmer as a worked example and can be run with the free GPU access provided by Google.

What's Next?

- Discover and play with new datasets at https://mozilladatacollective.com/datasets

- Share your own fine-tuned model and contribute back to the collection

- Join the conversation on Discord (#mozilla-data-collective), Reddit, or LinkedIn

The speech-to-text-finetune blueprint is open source under the Apache 2.0 License. It was created by Mozilla.ai and adapted by the Mozilla Data Collective community.